Pursuit of in-memory computing has long been an active area, with recent progress showing promise. Just how in-memory computing works, how close it is to practical application, and what key opportunities and challenges it faces formed the core of IBM researcher Evangelos Eleftheriou’s keynote at the annual HiPEAC conference, held virtually this week.

The HiPEAC – High Performance Embedded Architecture and Compilation – project began as one of Europe’s Horizon 2020 projects intended to advance HPC. Coincident with this year’s virtual conference, the organization released HiPEAC Vision 2021, which perhaps paradoxically but hopefully, suggests Europe “seize the opportunity presented by the influence of the COVID-19 pandemic” to develop user-centered IT systems that prioritize security and convenience.

The HiPEAC Vision 2021 document is freely available for download. This year’s conference also included an Industry Day, keynoted by AMD’s Brad McCredie discussing the path to exascale (HPCwire will have coverage later). Not surprisingly, much of the agenda looked at AI writ large.

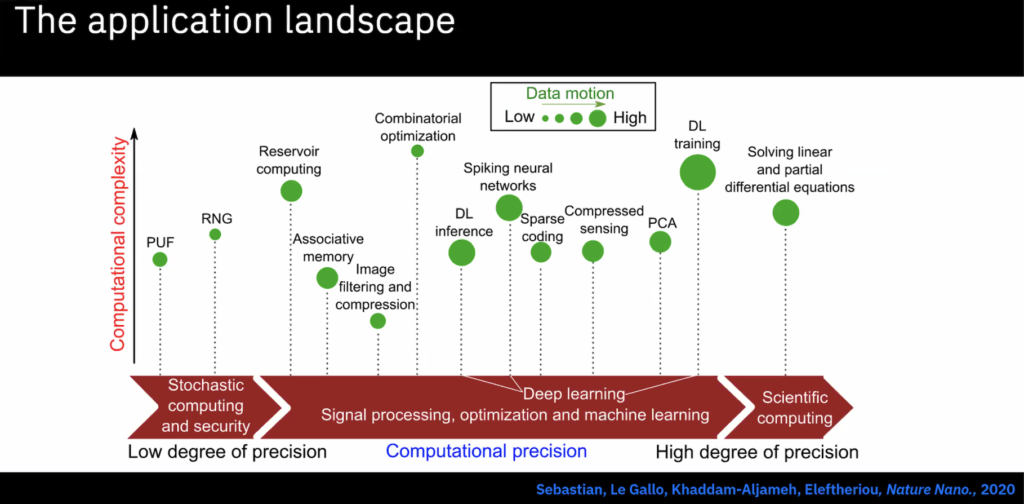

Eleftheriou’s talk dug into in-memory computing and the big problem it tackles, which is data transfer overhead in terms of latency and energy consumption. His snapshot of the landscape looked at how phase change memory and more familiar SRAM/DRAM technology can be used as alternatives to traditional von Neumann compute architectures. He emphasized how deep learning techniques can be implemented using in-memory computing.

“In the last five-plus years, AI has become synonymous with deep learning. And the progress in this area is fast and dramatic. We are at a point where, for example, image and speech recognition can produce accuracies close to or better than the human brain. Most of the fundamental algorithmic developments around deep learning go back decades,” said Eleftheriou, an IBM Fellow working at IBM’s Zurich Research Laboratory.

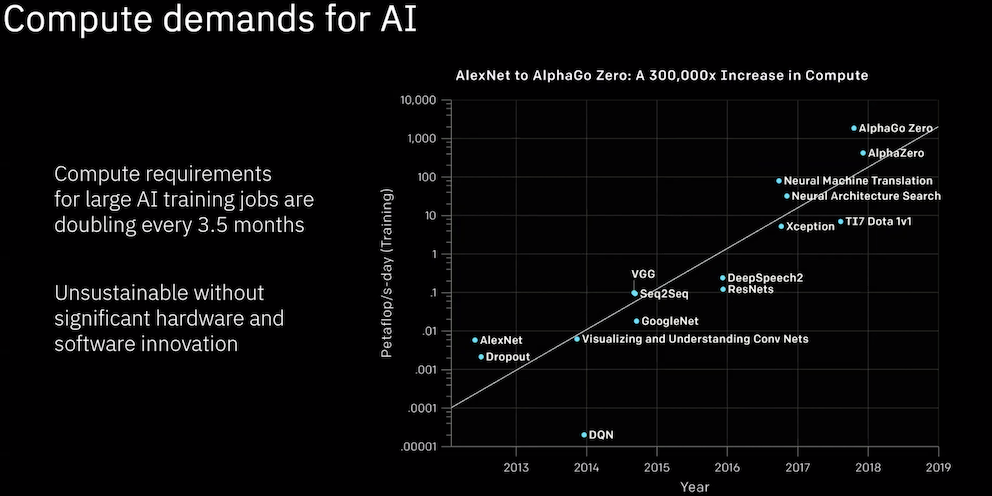

The key challenge in computing today, he said, is the mushrooming of compute requirements caused by AI adoption. “For really large AI training jobs, [compute requirements] are doubling every 3.5 months. Clearly, this is unsustainable without fundamental hardware and software innovation,” he said.
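To put the quoted doubling rate in annual terms, here is a minimal sketch that assumes steady exponential growth with a 3.5-month doubling period. The function name and constant are illustrative only and do not come from the talk.

```python
# Doubling period quoted in the keynote for very large AI training jobs.
DOUBLING_PERIOD_MONTHS = 3.5


def growth_factor(months: float) -> float:
    """Compute-demand multiplier after `months`, assuming steady doubling."""
    return 2 ** (months / DOUBLING_PERIOD_MONTHS)


if __name__ == "__main__":
    for months in (3.5, 12, 24):
        print(f"after {months} months: x{growth_factor(months):.1f}")
```

Under that assumption, demand grows by roughly a factor of 10.8 per year, which is the scale behind the "unsustainable" remark.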

As it turns out, matrix vector multiplication is the dominant function. “[F]or the three most common AI applications, such as speech recognition, natural language processing, and computer vision, which involve RNNs, LSTMs and CNNs, the matrix vector multiplications constitute 70 to 90% of the total deep learning operations. The rest of the operations are simple element-wise operations with single or multiple word operands,” Eleftheriou said.

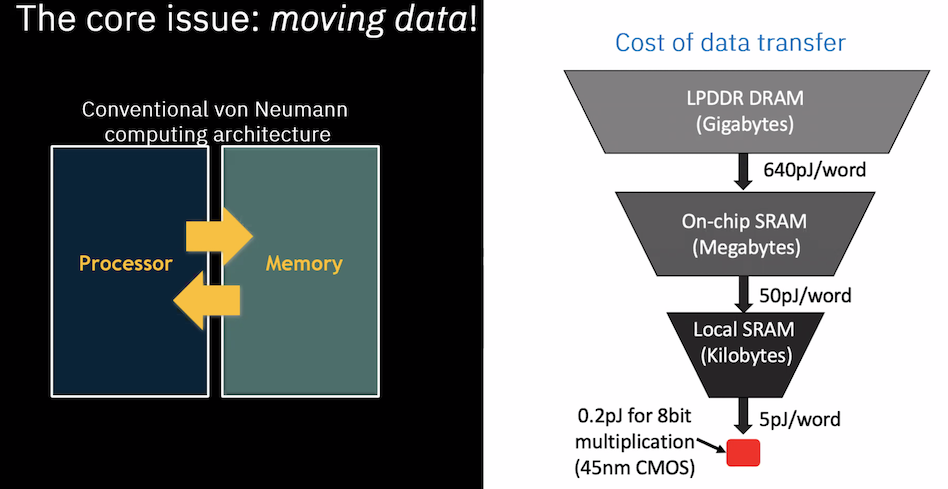

Broadly, the core issue is moving data between the processor and the memory in conventional von Neumann computer architecture. There’s a cost for every data transfer that in-memory computing can reduce.

“If I want to transfer data from a DRAM, the energy consumption is 640 picojoules per word, then if I go down to the SRAM, it is 50 picojoules and when I have a small local SRAM, it’s about 5 picojoules. So compare these energy consumptions for data transfer to the cost of an 8-bit multiplication. So it is only 0.2 picojoules per eight bits, which is a factor of about 3,000 less than transferring data from DRAM. Although this study was back in 2014, for the 45 nanometer CMOS technology node, there are recent studies that show that the problem gets exacerbated as you move down the lithography node,” said Eleftheriou.
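The ratios in the quote can be checked with a few lines of arithmetic. The sketch below uses only the figures Eleftheriou cited (45 nm CMOS, 2014 study); the dictionary keys and function name are illustrative labels. The transfer figures are quoted per word and the multiply per eight bits, so the ratios are rough comparisons rather than like-for-like measurements.

```python
# Energy figures quoted in the keynote, in picojoules.
ENERGY_PJ = {
    "dram_transfer": 640.0,       # per word, from DRAM
    "sram_transfer": 50.0,        # per word, from SRAM
    "local_sram_transfer": 5.0,   # per word, from a small local SRAM
    "multiply_8bit": 0.2,         # one 8-bit multiplication
}


def transfer_to_multiply_ratio(source: str) -> float:
    """How many 8-bit multiplies cost the same energy as one transfer."""
    return ENERGY_PJ[source] / ENERGY_PJ["multiply_8bit"]


if __name__ == "__main__":
    for source in ("dram_transfer", "sram_transfer", "local_sram_transfer"):
        ratio = transfer_to_multiply_ratio(source)
        print(f"{source}: about {ratio:.0f}x the energy of one multiply")
```

The DRAM case works out to about 3,200, consistent with the "factor of about 3,000" in the quote. Even a small local SRAM access costs about 25 times the multiply itself.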

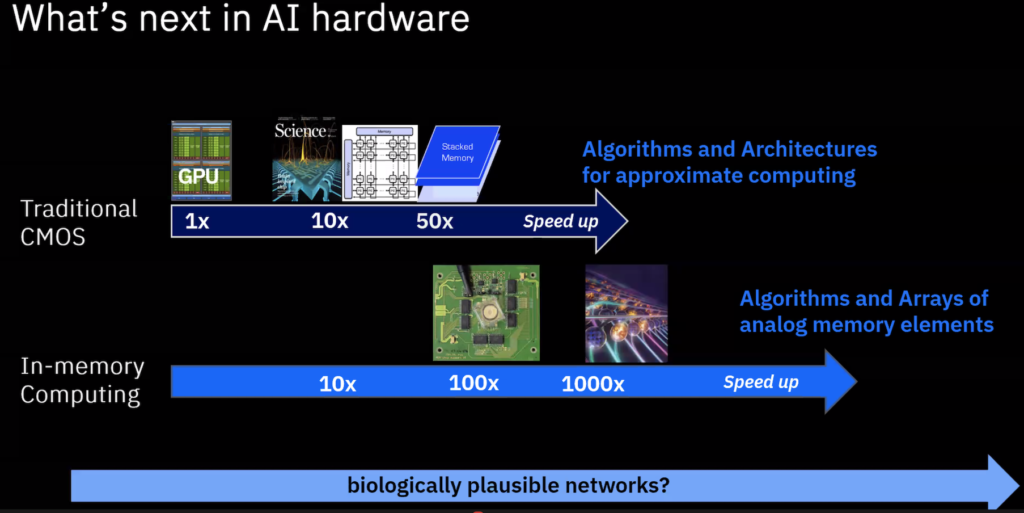

Eleftheriou, like others, argues that the need to solve the data transfer and management problem is driving opportunities 1) to develop future systems that minimize data movement by performing computation in or near where the data resides, and 2) to introduce novel computational primitives, like matrix vector multiplication, that facilitate deep learning workloads. Such innovations are under way. He noted 3D XPoint memory technology is opening new horizons for running many applications faster and with lower power requirements.

In-memory computing will also drive innovation, he said.

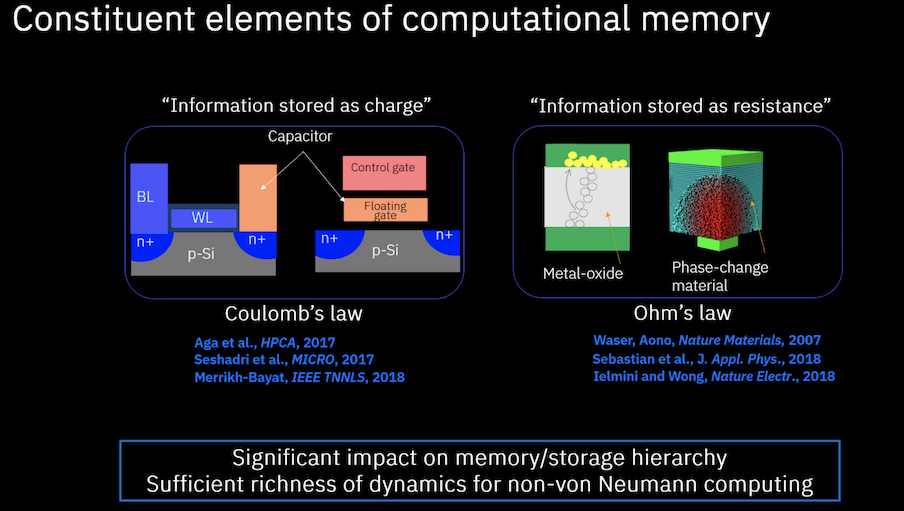

“Memory is not just a place where you park your data, but an active participant in computing. And here (slide below) we see the various incarnations of memory. On the left-hand side, we have the classical, you know, DRAM, SRAM, Flash, where information is stored as charge. In DRAM, we have an explicit capacitor. In flash, we have the floating gate. If I want to do computation, we use Coulomb’s Law. On the right-hand side, we have this emerging memory technology under the name memristors, where information is stored as resistance,” he said.

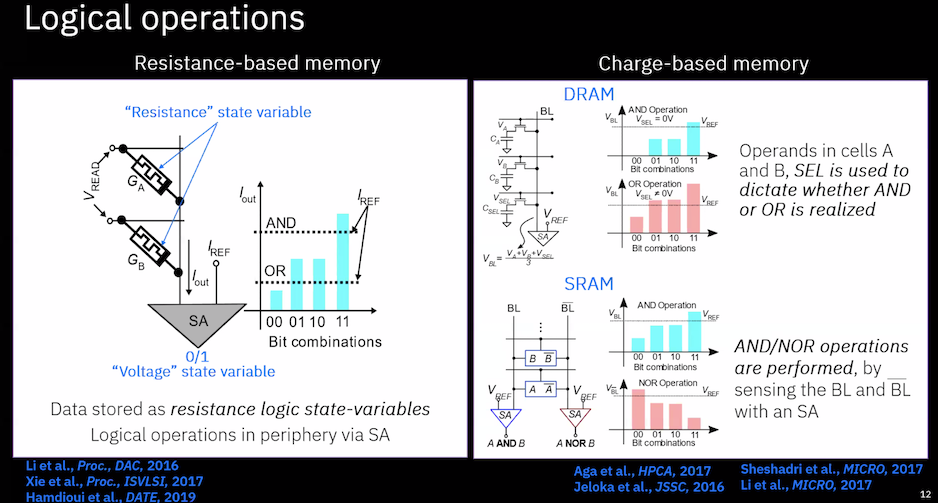

The new approaches, he said, “possess sufficient richness of dynamics for non-von Neumann computing. There is a range of in-memory logic and arithmetic operations that can be performed using both charge-based and resistance-based memory devices. This slide shows examples of logical operations. On the left-hand side, we can see schematics of bitwise AND and OR operations using two memory devices. Data are stored as resistance logic state value. Then with the appropriate choice of the current thresholds in the sense amplifier, it’s possible to implement AND and OR logical operations. On the right-hand side, we can see schematics of again bitwise AND and OR operations using DRAM and SRAM cells. In the case of DRAM, the operands are stored in the cells A and B and then there is a third DRAM cell that plays the role of a selector which is used to decide whether AND or OR is realized.”
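The resistive scheme described in the quote can be illustrated with a simplified model: two devices read in parallel, with the summed current compared against a threshold in the sense amplifier. The conductance values, read voltage, and function names below are assumptions chosen for illustration, not figures from the talk, and real devices would add noise and variability.

```python
# Hypothetical device parameters (not from the talk).
G_ON = 100e-6   # siemens: low-resistance state, encodes logic 1
G_OFF = 1e-6    # siemens: high-resistance state, encodes logic 0
V_READ = 0.2    # volts applied to both devices during a read


def read_current(a: int, b: int) -> float:
    """Total current when devices storing bits a and b are read in parallel."""
    total_conductance = (G_ON if a else G_OFF) + (G_ON if b else G_OFF)
    return V_READ * total_conductance  # Ohm's law, currents add on the line


# Current levels for zero, one, and two devices in the ON state.
I_NONE = read_current(0, 0)
I_ONE = read_current(1, 0)
I_BOTH = read_current(1, 1)

# Sense-amplifier thresholds placed midway between adjacent levels.
OR_THRESHOLD = (I_NONE + I_ONE) / 2    # trips if at least one device is ON
AND_THRESHOLD = (I_ONE + I_BOTH) / 2   # trips only if both devices are ON


def in_memory_or(a: int, b: int) -> int:
    return int(read_current(a, b) > OR_THRESHOLD)


def in_memory_and(a: int, b: int) -> int:
    return int(read_current(a, b) > AND_THRESHOLD)


if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, "AND:", in_memory_and(a, b), "OR:", in_memory_or(a, b))
```

In this model the same read operation yields AND or OR depending only on which threshold the sense amplifier uses, which is the point made in the quote.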

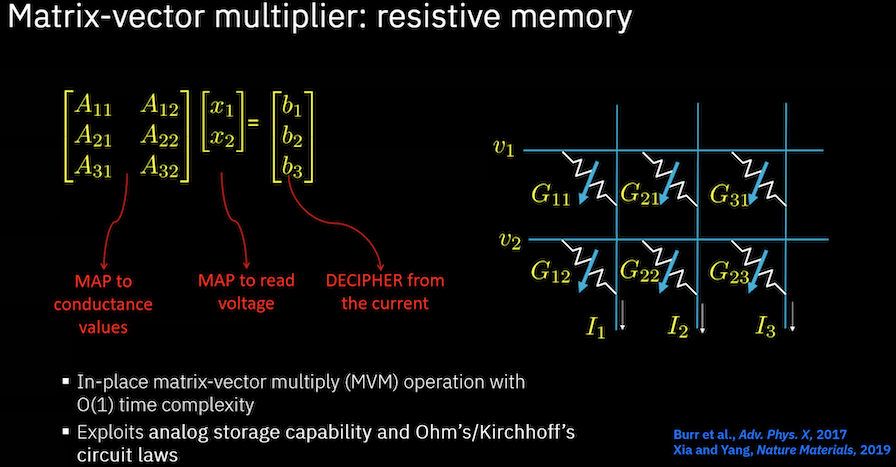

Using these techniques, Eleftheriou said it is possible to implement matrix multiply operations that are critical and abundant in DL. The example shown below is using resistive memory.

“Let’s assume now that I want to perform a matrix vector multiplication (slide below). On the left-hand side, you see a matrix, three rows two columns, multiplied by a two-dimensional vector that resolves as a three-dimensional vector. What we do is, we map the values – the elements of the matrix – into conductance values on the crossbar array; in other words we exploit the analog storage capability of the resistive memory. Then we map the two-dimensional vector into the read voltage at the input of the crossbar array. And the result automatically appears as current at the columns of the crossbar array. Therefore, I can have in-place matrix vector multiply with O(1) time complexity. And you can do the same thing for the transposed matrix and vector multiplication; in this case you just apply the voltages at the columns, and you get the current at the rows,” explained Eleftheriou.
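The mapping Eleftheriou describes can be written as an idealized numerical model: each matrix element becomes a conductance, each vector element a read voltage, and each output line collects the sum of the per-device currents (Ohm's law per device, Kirchhoff's current law per line). The sketch below is a software analogy only; the function names and example values are hypothetical, it ignores drift, noise, and wire resistance, and it assumes non-negative matrix values because a physical conductance cannot be negative. The Python loops run sequentially, whereas in the physical array all products and sums occur in a single read step.

```python
from typing import List

Matrix = List[List[float]]


def crossbar_mvm(conductances: Matrix, voltages: List[float]) -> List[float]:
    """Forward read: conductances[i][j] joins input line j to output line i.

    Output line i collects sum_j conductances[i][j] * voltages[j].
    """
    return [sum(g * v for g, v in zip(row, voltages)) for row in conductances]


def crossbar_mvm_transposed(conductances: Matrix, voltages: List[float]) -> List[float]:
    """Transposed read: drive the output lines, sense on the input lines."""
    n_inputs = len(conductances[0])
    return [
        sum(conductances[i][j] * voltages[i] for i in range(len(conductances)))
        for j in range(n_inputs)
    ]


if __name__ == "__main__":
    # A 3x2 matrix as in the talk's example; values are arbitrary.
    G = [[1.0, 2.0],
         [3.0, 4.0],
         [5.0, 6.0]]
    print(crossbar_mvm(G, [1.0, 0.5]))                  # three output currents
    print(crossbar_mvm_transposed(G, [1.0, 1.0, 1.0]))  # two output currents
```

The same stored conductances serve both the forward and the transposed product, which matters for training workloads where both directions are needed.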

Many challenges remain. Eleftheriou noted a new software stack would be needed. “You have different computational primitives. You have matrix vector multiplications. You have a different control, you know, that you need when you implement a data flow engine, different compilers are needed, and so on,” he said. Conductance drift remains a problem for resistive memory, he added, but efforts are ongoing to cope with that.

Practical devices are still a ways off. “There are many startups out there working on it. I believe it will be a couple of years until we see products on the market. That’s my guess,” he said.

There was a good deal more detail in Eleftheriou’s presentation. If a video becomes available, HPCwire will update this article with a link.

Slides sourced from Evangelos Eleftheriou’s (IBM) HiPEAC keynote

Feature image: IBM PCM chip