Artificial neural networks learn better when they spend time not learning at all

Depending on age, humans need 7 to 13 hours of sleep per 24 hours. During this time, a lot happens: Heart rate, breathing and metabolism ebb and flow; hormone levels adjust; the body relaxes. Not so much in the brain.

"The brain is very busy when we sleep, repeating what we have learned during the day," said Maxim Bazhenov, Ph.D., professor of medicine and a sleep researcher at University of California San Diego School of Medicine. "Sleep helps reorganize memories and presents them in the most efficient way."

In previously published work, Bazhenov and colleagues have reported how sleep builds relational memory, the ability to remember arbitrary or indirect associations between objects, people or events, and how it protects against forgetting old memories.

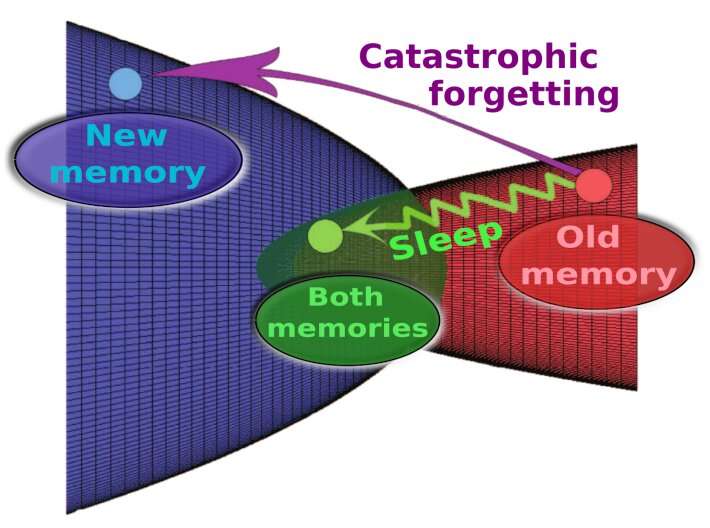

Artificial neural networks leverage the architecture of the human brain to improve numerous technologies and systems, from basic science and medicine to finance and social media. In some respects, such as computational speed, they have achieved superhuman performance, but they fail in one key aspect: when artificial neural networks learn sequentially, new information overwrites previous information, a phenomenon called catastrophic forgetting.
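Catastrophic forgetting can be seen even in a two-weight linear model trained by gradient descent. The toy sketch below is an illustration under stated assumptions, not the networks used in the study: the tasks `task_a` and `task_b`, the helpers `predict`, `train` and `mse`, and the learning rate are all hypothetical. Training on task B alone pulls the shared first weight away from the value task A relied on, even though a setting of the weights that solves both tasks exists.

```python
def predict(w, x):
    """Linear model: dot product of weights and inputs."""
    return sum(wi * xi for wi, xi in zip(w, x))

def train(w, data, lr=0.1, epochs=500):
    """Plain stochastic gradient descent on squared error, one example at a time."""
    w = list(w)
    for _ in range(epochs):
        for x, y in data:
            err = predict(w, x) - y
            # d/dw_i of (w.x - y)^2 is 2 * err * x_i
            w = [wi - lr * 2 * err * xi for wi, xi in zip(w, x)]
    return w

def mse(w, data):
    """Mean squared error of the linear model on a dataset."""
    return sum((predict(w, x) - y) ** 2 for x, y in data) / len(data)

# Hypothetical tasks whose inputs overlap in the first feature.
task_a = [((1.0, 1.0), 2.0)]
task_b = [((1.0, 0.0), 0.0)]

w_a = train((0.0, 0.0), task_a)   # converges to about (1, 1)
w_ab = train(w_a, task_b)         # then trained on task B only: about (0, 1)

print("task A error after A:", mse(w_a, task_a))
print("task A error after B:", mse(w_ab, task_a))
# The weights (0, 2) fit both tasks, so losing task A is not a capacity limit.
print("joint solution errors:", mse((0.0, 2.0), task_a), mse((0.0, 2.0), task_b))
```

Task A's error rises from essentially zero to about 1 once the model is trained on task B, because sequential training only follows the gradient of the current task and nothing anchors the weights that the old task needed.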

"In contrast, the human brain learns continuously and incorporates new data into existing knowledge," said Bazhenov, "and it typically learns best when new training is interleaved with periods of sleep for memory consolidation."

Writing in the November 18, 2022 issue of PLOS Computational Biology, senior author Bazhenov and colleagues discuss how biological models may help mitigate the threat of catastrophic forgetting in artificial neural networks, boosting their utility across a spectrum of research interests.

The scientists used spiking neural networks that artificially mimic natural neural systems: Instead of information being communicated continuously, it is transmitted as discrete events (spikes) at certain time points.
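To make "discrete events (spikes) at certain time points" concrete, here is a minimal sketch of a leaky integrate-and-fire (LIF) neuron, a common textbook spiking model. The article does not say which neuron model the study used; the function `lif_spike_times` and every parameter value (time constant, threshold, reset, input currents) are illustrative assumptions.

```python
def lif_spike_times(input_current, dt=1.0, tau=20.0,
                    v_rest=0.0, v_thresh=1.0, v_reset=0.0):
    """Return the time steps at which a leaky integrate-and-fire neuron spikes."""
    v = v_rest
    spikes = []
    for t, i_in in enumerate(input_current):
        # The membrane potential leaks toward rest and integrates the input.
        v += dt * (-(v - v_rest) / tau + i_in)
        if v >= v_thresh:
            spikes.append(t)  # a discrete event: one spike at this time step
            v = v_reset       # the potential is reset after firing
    return spikes

weak = lif_spike_times([0.04] * 100)    # settles below threshold
strong = lif_spike_times([0.2] * 100)   # crosses threshold repeatedly
print(len(weak), strong[:3])
```

With the weak constant input the membrane potential levels off below threshold and the neuron stays silent; the stronger input drives it past threshold at regular intervals. Either way, the neuron's output is a list of spike times rather than a continuous value.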

They found that when the spiking networks were trained on a new task, but with occasional off-line periods that mimicked sleep, catastrophic forgetting was mitigated. Like the human brain, said the study authors, "sleep" for the networks allowed them to replay old memories without explicitly using old training data.

Memories are represented in the human brain by patterns of synaptic weight: the strength or amplitude of a connection between two neurons.

"When we learn new information," said Bazhenov, "neurons fire in a specific order and this increases [the strength of] synapses between them. During sleep, the spiking patterns learned during our awake state are repeated spontaneously. It's called reactivation or replay.

"Synaptic plasticity, the capacity to be altered or molded, is still in place during sleep and it can further enhance synaptic weight patterns that represent the memory, helping to prevent forgetting or to enable transfer of knowledge from old to new tasks."
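The article does not spell out the plasticity rule, but a common model of "neurons that fire in a specific order strengthen the synapses between them" is pair-based spike-timing-dependent plasticity (STDP). The sketch below assumes that rule; the functions `stdp_dw` and `apply_pairs` and all constants (`a_plus`, `a_minus`, `tau`, the weight bounds) are hypothetical, illustrative values and are not taken from the study.

```python
import math

def stdp_dw(t_pre, t_post, a_plus=0.01, a_minus=0.012, tau=20.0):
    """Weight change for one pre/post spike pair (times in ms)."""
    dt = t_post - t_pre
    if dt > 0:   # presynaptic spike precedes postsynaptic spike: strengthen
        return a_plus * math.exp(-dt / tau)
    if dt < 0:   # postsynaptic spike precedes presynaptic spike: weaken
        return -a_minus * math.exp(dt / tau)
    return 0.0

def apply_pairs(w, pairs, w_min=0.0, w_max=1.0):
    """Apply STDP for each (t_pre, t_post) pair, keeping w within [w_min, w_max]."""
    for t_pre, t_post in pairs:
        w = min(w_max, max(w_min, w + stdp_dw(t_pre, t_post)))
    return w

# The same firing order repeated three times, as in replay of a learned sequence.
replay = [(10, 15), (40, 45), (70, 75)]
w = apply_pairs(0.5, replay)
# The opposite order (post before pre) weakens the same synapse instead.
w_rev = apply_pairs(0.5, [(t_post, t_pre) for t_pre, t_post in replay])
print(round(w, 4), round(w_rev, 4))
```

Each replay of the learned order nudges the weight upward, so repeated reactivation during a sleep-like phase can keep reinforcing a pattern without any new training data; reversing the order pushes the weight down instead.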

When Bazhenov and colleagues applied this approach to artificial neural networks, they found that it helped the networks avoid catastrophic forgetting.

"It meant that these networks could learn continuously, like humans or animals. Understanding how the human brain processes information during sleep can help to augment memory in human subjects. Augmenting sleep rhythms can lead to better memory.

"In other projects, we use computer models to develop optimal strategies to apply stimulation during sleep, such as auditory tones, that enhance sleep rhythms and improve learning. This may be particularly important when memory is non-optimal, such as when memory declines in aging or in some conditions like Alzheimer's disease."

Co-authors include: Ryan Golden and Jean Erik Delanois, both at UC San Diego; and Pavel Sanda, Institute of Computer Science of the Czech Academy of Sciences.

More information: Ryan Golden et al., Sleep prevents catastrophic forgetting in spiking neural networks by forming a joint synaptic weight representation, PLOS Computational Biology (2022). DOI: 10.1371/journal.pcbi.1010628

Journal information: PLOS Computational Biology

Provided by University of California - San Diego